In 1086, following a great political convulsion throughout England, William the Conqueror commissioned one of the largest data gatherings performed up to that day. So painstakingly tedious were the Royal Commissioners in collecting all information related to the number of persons, land, and goods that the Anglo-Saxon Chronicle recorded, “there was not one single hide, nor a yard of land, nay, moreover...not even an ox, nor a cow, nor a swine was there left, that was not set down in his writ.”

The aim of this massive undertaking, of course, was to make sure that all taxes, dues, and proceeds were being collected, and to have an authoritative “database” by which the State, or Crown, could enforce its fiscal rights to land and wealth.

Given its comprehensive detail and supreme authority, this megafile of information became known as the “Domesday” Book (today: “Doomsday”), named after the biblical Day of Judgment when everyone's life is laid bare.

Fast forward to today, and we see governments are still up to their dirty tricks. Now, though, instead of being collected by hand in one large book, our data is gathered automatically by a vast computational network of corporations and governmental agencies storing, tracking, and modeling society at large.

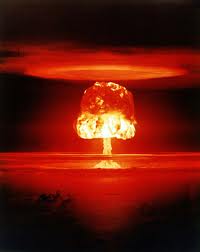

Interestingly, for most people, the idea of "Doomsday" involves only worldwide destruction. With the advent of the hydrogen bomb and its terrifying explosive power, this image solidified and displaced all other meanings, so that now, when we speak of a doomsday machine or device, few trace the word's origins back to the revelatory detail of one's life.

Yet, in reality, the power inherent to a doomsday machine does not lie in a massive thermonuclear explosion. The true power lies in what creates it. As John von Neumann, who helped create the first hydrogen weapon, Ivy Mike, said, “I am thinking about something much more important than bombs. I am thinking about computers.”

As George Dyson recounts in his recent book, Turing’s Cathedral, “Computers were essential to the initiation of nuclear explosions, and to understanding what happens next.” More poetically, he writes, “Numerical simulation of [nuclear] chain reactions within computers initiated a chain reaction among computers, with machines and codes proliferating as explosively as the phenomena they were designed to help us understand. It is no coincidence that the most destructive and the most constructive of human inventions appeared at exactly the same time.”

This brings us to today, where another explosion (a Big Bang, if you will) of such magnitude has occurred that we can only describe it using the same vague language we use to describe the birth of our universe: "Big Data".

The runaway explosion of computational power coupled with unimaginable data has yielded a scale of intelligence that is beyond human. Consider some points from Viktor Mayer-Schonberger and Kenneth Cukier’s recent book, “Big Data: A Revolution That Will Transform How We Live, Work, and Think”:

- By processing 50 million of the most common search terms through 450 million different mathematical models, Google was able to predict the spread of the H1N1 flu virus in near real-time, far quicker than the CDC using reports provided from doctors.

- Using modern computation it took a decade to finally decode the human genome. Now, ten years later, using Big Data techniques, it takes a single day.

- “Google processes more than 24 petabytes of data per day, a volume that is thousands of times the quantity of all printed material in the U.S. Library of Congress.”

- If all the digital information in the world were printed on books, it would “cover the entire surface of the United States some 52 layers thick. If it were placed on CD-ROMs and stacked up, they would stretch to the moon in five separate piles.”

- Since the invention of the Gutenberg printing press, it took 50 years for the amount of information to double. This now occurs roughly every three years.

- Using Big Data, the Obama campaign simulated the election 66,000 times every night using characteristics like age, sex, race, neighborhood, voting record, and consumer data to predict and guarantee the results ahead of time.

- MIT scientists automated the process of tracking inflation in real-time using software tracking half a million products online. It takes the BLS hundreds of staff, costs 0 million per year, and suffers a delay of a few weeks.

- By tracking 1,260 data points per second related to heart rate, respiration rate, temperature, blood pressure, and so on, Dr. Carolyn McGregor found that the vital signs of premature babies actually stabilize, not deteriorate, right before a major infection. Not only was this never known, but it defied common wisdom.

- Using Big Data on credit scores and a wealth of other variables, FICO’s CEO boasted in 2011, “We know what you’re going to do tomorrow.”

The list of astonishing changes and developments arising from big data is too long to give here, which is why I’d highly recommend reading the book cited above to get a grasp of what’s taking place.

Coming back to where we started, consider the following admonition from Mayer-Schonberger and Cukier given the realities of ubiquitous data and computation:

“[A]lgorithms will predict the likelihood that one will get a heart attack (and pay more for health insurance), default on a mortgage (and be denied a loan), or commit a crime (and perhaps get arrested in advance). It leads to an ethical consideration of the role of free will versus the dictatorship of data. Should individual volition trump big data, even if statistics argue otherwise? Just as the printing press prepared the ground for laws guaranteeing free speech—which didn’t exist earlier because there was so little written expression to protect—the age of big data will require new rules to safeguard the sanctity of the individual.”

When faced with the twin creation of the bomb and modern computer, von Neumann woke one night and told his wife, “What we are creating now is a monster whose influence is going to change history, provided there is any history left.” It wasn’t the bomb, however, that worried him most. It was, as Dyson writes, “the growing powers of machines.”

Ironically, what the writers of “Big Data” teach us is that the true power of the machine does not lie inside silicon and microchips, but in us. We provide the data it needs to get bigger, smarter, and more powerful. Yet, and here’s the most important part, big data isn’t about machines getting smarter, but about society processing information at a larger and higher scale than humanly possible. Still, Mayer-Schonberger and Cukier warn, the age we are entering “will require new rules to safeguard the sanctity of the individual.” What sanctity are they referring to?

Today, with the NSA leaks, we now know that the government is actively creating a much more powerful “Doomsday Machine” than we ever imagined. As Edward Snowden warned, “When a new leader is elected, they'll flip the switch, say that because of the crisis, because of the dangers that we face in the world—some new and unpredicted threat—we need more authority; we need more power. And there will be nothing the people can do at that point to oppose it. And it'll be turnkey tyranny."

Edward Snowden was wrong on one point: the “Doomsday Machine” contains no switch, nor can it be turned off. Why? Because we, in the massive current of data flooding the earth, are what power it. To truly get rid of such a “machine,” then, would require a doomsday of, well, the biblical sort.

Cris Sheridan is Senior Editor of Financial Sense. You can follow him on Twitter: @CrisSheridan
